Content Moderation

Last updated: April 27, 2026

Content Moderation helps brands monitor affiliate creator content for compliance with brand safety guidelines. It automatically scans videos and flags content containing unwanted language, unapproved claims, or visual elements that don't meet your standards.

Common use cases include:

Removing prohibited content from GMV Max

Sending violating creators a warning message

Checking for brand overlays (e.g., Walmart Partner) before adding content to GMV Max

Video Demo

https://www.loom.com/share/4b33752f9b1046fc8824fd64e867f845

What You Can Monitor

Transcript Keywords

Scan video transcripts for required terms, such as your brand name or disclaimers, and flag prohibited terms, such as "discount," "cheapest," or unapproved claims.

On-Screen Text Keywords

Detect unauthorized messaging in video text overlays, or ensure required disclaimers appear on screen.

Visual Compliance

Define rules in plain language for AI analysis

Examples: "No children under 13" or "Product must not be shown being consumed directly from packaging"
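To make the keyword checks concrete, here is a minimal sketch of how required and prohibited terms could be evaluated against a transcript or on-screen text. It is an illustration only, not the product's actual matching logic: the function names, the case-insensitive whole-word matching, and the sample brand name "Acme" are all assumptions.

```python
import re


def _contains(text, term):
    # Assumed behavior: case-insensitive whole-word match,
    # so "discount" does not match "discounted".
    pattern = r"(?<!\w)" + re.escape(term) + r"(?!\w)"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def check_keywords(text, required=(), prohibited=()):
    """Return a list of findings for one piece of text (hypothetical helper)."""
    findings = []
    for term in required:
        if not _contains(text, term):
            findings.append(f"missing required: {term}")
    for term in prohibited:
        if _contains(text, term):
            findings.append(f"found: {term}")
    return findings


# Hypothetical transcript and rules, for illustration only.
transcript = "This is the cheapest moisturizer I have tried. Thanks to Acme for sending it."
print(check_keywords(transcript, required=["Acme", "#ad"], prohibited=["discount", "cheapest"]))
# → ['missing required: #ad', 'found: cheapest']
```

Whether partial matches (for example "discounted") should count is a policy choice; if the tool's behavior matters for your rules, it may be worth testing both forms as separate keywords.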

How to Use

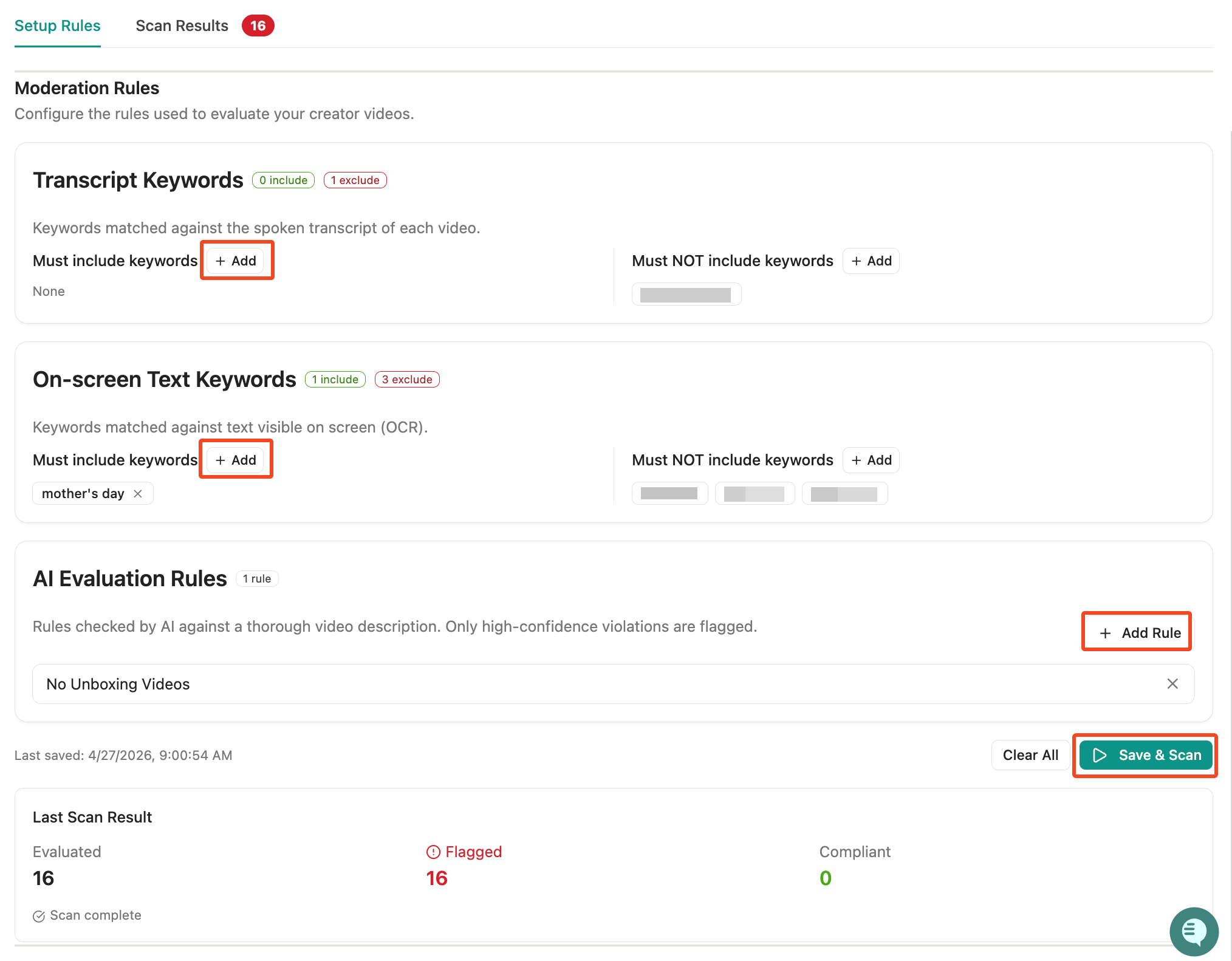

Step 1: Set Up Rules

Go to My Brand → Content Moderation → Manage Rule

Add keywords that must appear in transcripts or on-screen text

Add keywords that must NOT appear

Define any visual compliance rules
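Before entering rules, it can help to draft them in one place and check that no keyword appears in both the required and prohibited lists. The sketch below is a hypothetical planning aid, not something the product exposes; the `RuleSet` class and its field names are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class RuleSet:
    """Hypothetical container for a draft set of moderation rules."""
    required_keywords: list = field(default_factory=list)
    prohibited_keywords: list = field(default_factory=list)
    visual_rules: list = field(default_factory=list)

    def conflicts(self):
        """Return keywords listed as both required and prohibited (case-insensitive)."""
        required = {k.lower() for k in self.required_keywords}
        prohibited = {k.lower() for k in self.prohibited_keywords}
        return sorted(required & prohibited)


rules = RuleSet(
    required_keywords=["Walmart Partner"],
    prohibited_keywords=["discount", "cheapest"],
    visual_rules=["No children under 13"],
)
print(rules.conflicts())  # an empty list means no keyword is in both lists
```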

Step 2: Run a Scan

Go to the Set Up Moderation tab

Review your current rules

Click Start Scan

Monitor progress as videos are processed

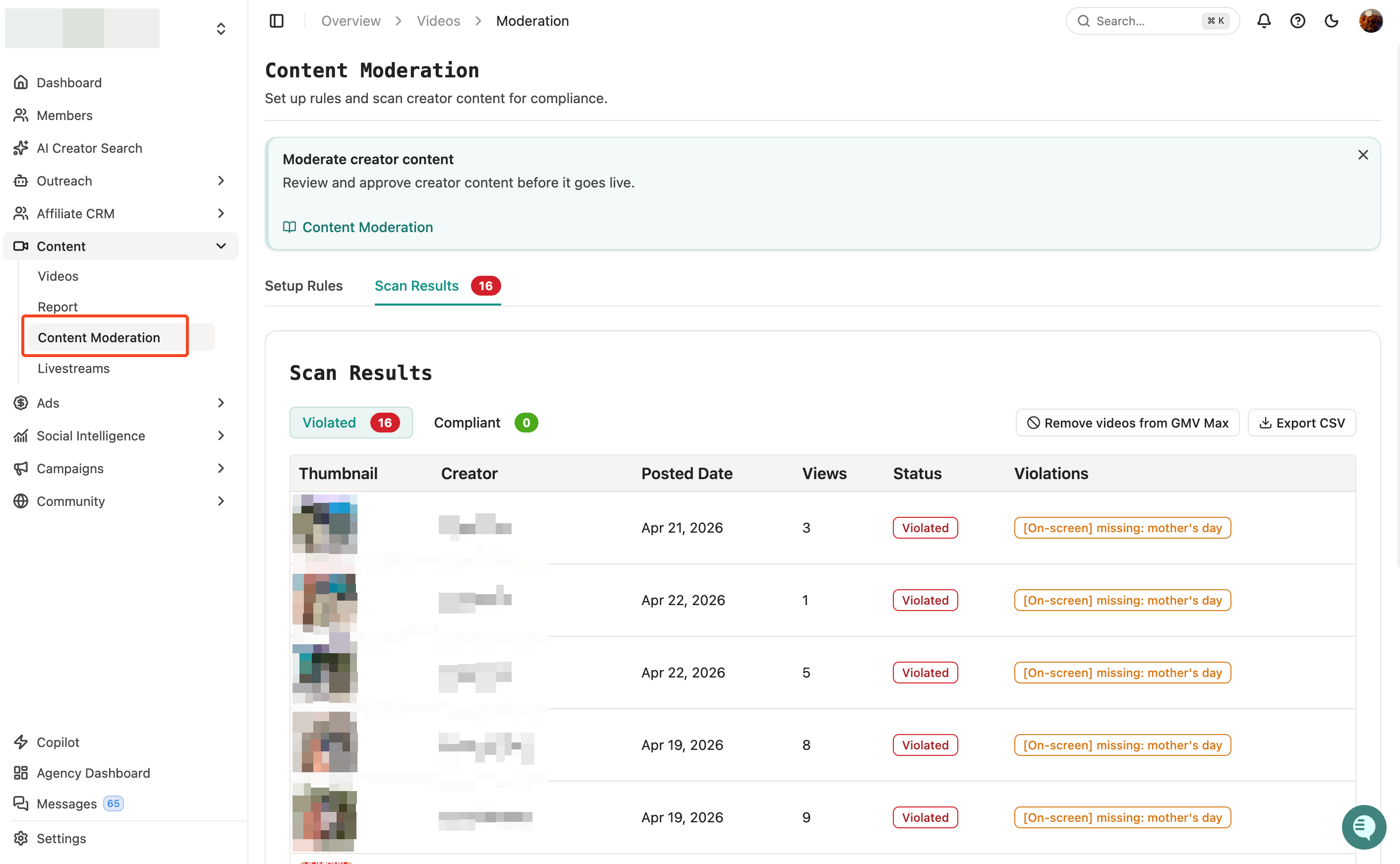

Step 3: Review Results

Go to the Scan Results tab

Violated – Videos that failed your rules

Compliant – Videos that passed all rules

Awaiting Data – Videos still being processed

For each flagged video, you'll see exactly what triggered the violation (e.g., "[Transcript] found: discount").
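If you want to tally triggers outside the tool, a message like the one above can be split into its source and term. This sketch assumes every trigger follows the shape of that single example, "[Source] found: term"; other message shapes may exist and would return `None` here. The `parse_trigger` helper is hypothetical.

```python
import re

# Assumed shape: "[<Source>] found: <term>", based on the single documented example.
TRIGGER_PATTERN = re.compile(r"^\[(?P<source>[^\]]+)\]\s+found:\s*(?P<term>.+)$")


def parse_trigger(message):
    """Split a trigger message into source and term, or return None if it does not match."""
    match = TRIGGER_PATTERN.match(message.strip())
    if match is None:
        return None
    return {"source": match.group("source"), "term": match.group("term").strip()}


print(parse_trigger("[Transcript] found: discount"))
# → {'source': 'Transcript', 'term': 'discount'}
```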

Step 4: Take Action

Export CSV – Download results for review

Remove from GMV Max – Prevent non-compliant videos from being boosted

Message creators – Reach out about guideline violations

Collect Spark Codes – Request authorization from compliant creators
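After exporting a CSV, one way to prepare creator outreach is to group violating videos by creator. The sketch below uses made-up sample data, and the column names (`video_id`, `creator`, `status`, `reason`) are assumptions; check the header row of your actual export and adjust accordingly.

```python
import csv
import io
from collections import defaultdict

# Hypothetical export contents; the real column names and values may differ.
SAMPLE_EXPORT = """video_id,creator,status,reason
v1,creator_a,Violated,[Transcript] found: discount
v2,creator_b,Compliant,
v3,creator_a,Violated,[Transcript] found: cheapest
v4,creator_c,Awaiting Data,
"""


def violations_by_creator(csv_file):
    """Group (video_id, reason) pairs by creator for rows marked Violated."""
    grouped = defaultdict(list)
    for row in csv.DictReader(csv_file):
        if row["status"] == "Violated":
            grouped[row["creator"]].append((row["video_id"], row["reason"]))
    return dict(grouped)


for creator, videos in violations_by_creator(io.StringIO(SAMPLE_EXPORT)).items():
    print(creator, videos)
```

To run this against a downloaded file instead of the sample string, pass `open("your_export.csv", newline="")` in place of the `io.StringIO` object.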